Copilots Raise Productivity. Operating Models Create Outcomes

Reader’s Map, a 4-Part Article Series

Why “we already have Copilots” is true, and still strategically incomplete. Copilots raise productivity; they do not, by themselves, create an execution capability that can safely move regulated work through the institution (Part 1 of 4)

The control deficit. The difference between a helpful assistant and mission-critical AI is not eloquence; it is controllability: governed behavior, evidence, identity, oversight, and reliability under operational stress (Part 2 of 4)

A practical definition of “mission-critical AI.” Five tests that separate AI you can demo from AI you can deploy into regulated value streams (Part 3 of 4)

Use case: commercial onboarding as an agentic flow. Not “AI that drafts emails,” but AI that closes files cleanly, catches exceptions early, and leaves audit-ready evidence behind (Part 4 of 4)

In this article, we will cover Part 1 of 4.

Copilots Raise Productivity. Operating Models Create Outcomes.

Confusing the two is why so many AI transformations stall.

There is a specific moment every leadership team reaches after copilots land in the organization. It often comes after the first internal success stories circulate: analysts write faster, meeting notes are captured automatically, and the “blank page problem” fades. People feel the lift. Budgets look justified. Adoption charts rise. The institution starts to talk about “AI” with a little less anxiety and a little more confidence.

Then someone asks the question that changes the tone in the room: If we already have copilots, why are we still slow? Why does commercial onboarding still drag? Why do underwriting packs still take days to assemble? Why do exceptions still bounce between teams? Why does cycle time in the processes that actually carry P&L and risk feel stubbornly unchanged?

If you’re a business leader, that question isn’t philosophical. It is a test of whether your AI program is a real strategic lever or a modern convenience layered on top of the same old machine. It is also where the most common mistake in enterprise AI shows up: organizations treat copilots as if they were transformation, when they are better understood as a productivity upgrade. Both matter. Only one changes competitiveness.

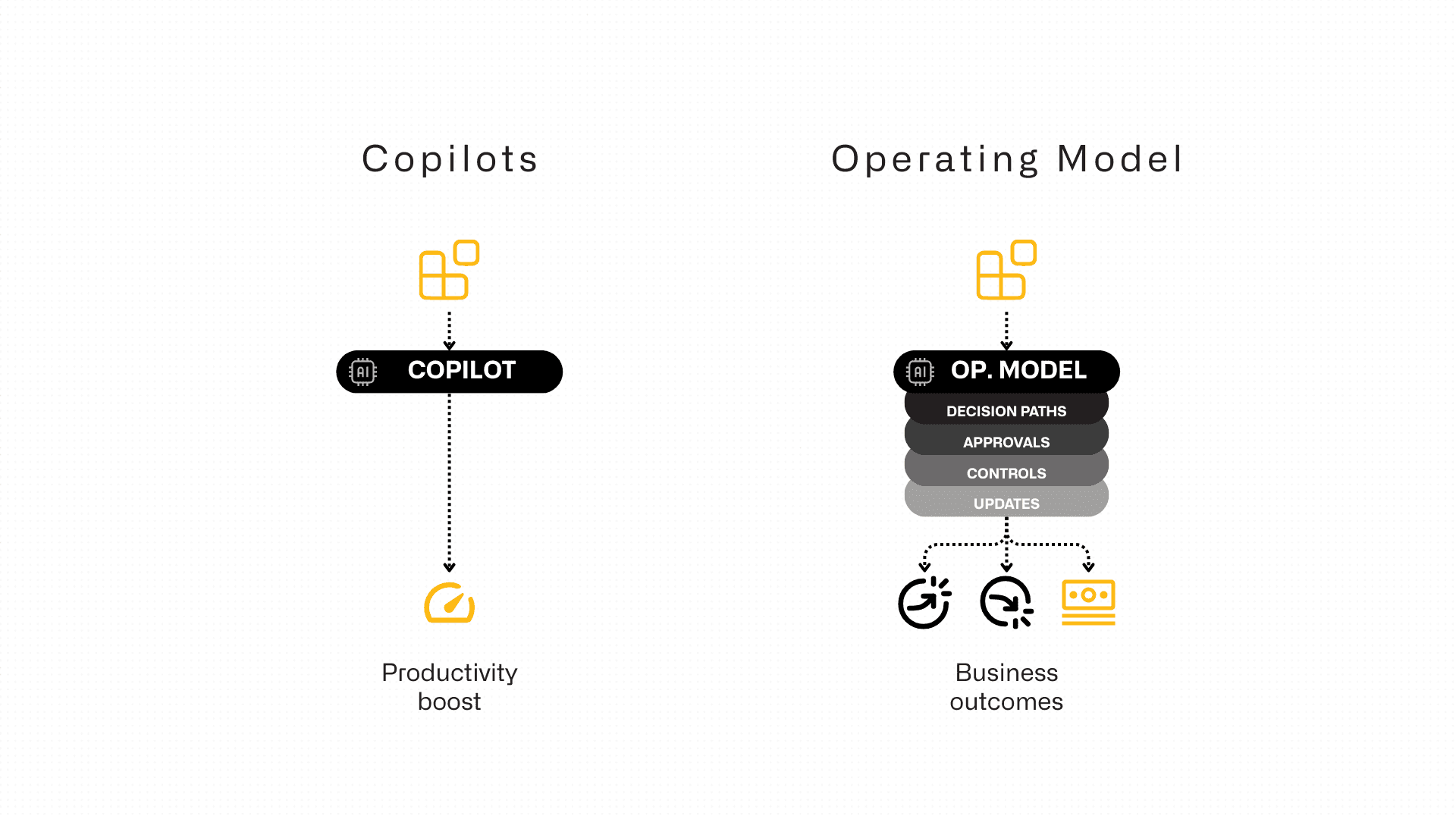

The simplest way to frame the issue is to separate two kinds of speed. Copilots increase individual speed. They help a person draft, summarize, analyze, and communicate more efficiently inside the way work is already done. Operating models determine institutional speed. They control how work moves through approvals, systems, policies, exceptions, and handoffs. In regulated industries, the second kind of speed is what customers feel, what regulators inspect, and what shareholders ultimately pay for.

That is why “we already have copilots” is true, and still strategically incomplete. Copilots are valuable. They are not, by themselves, an execution capability.

The productivity trap: why AI adoption doesn’t automatically become ROI

Copilots are designed to meet people where knowledge work lives: documents, email, meetings, internal collaboration. This is why they spread quickly and why their early benefits are easy to see. A manager reads a sharper brief. A team produces a better deck. A client email becomes more polished. A busy leader gets back time from administrative overhead. The organization becomes more articulate and a little more efficient.

It is also why copilots can create a subtle expectation gap. Once leaders see a system generate coherent language in seconds, it becomes natural to ask why the institution cannot generate outcomes in days. That question is rational, and it is where the category error begins. In a bank or insurer, the bottleneck in core value streams is rarely the ability to write or summarize. The bottleneck is the work that sits between intent and completion: validation, exception handling, evidence gathering, approvals, entitlements, and the choreography across systems of record.

A productivity tool can improve how well teams navigate those constraints. It does not dissolve the constraints. This is why many AI programs feel transformational in week two and merely helpful in month six. They improve the human layer, but the machine underneath stays the same.

If leadership wants a clean diagnostic, it is this: are we using AI to help people explain and manage operational complexity, or are we using AI to reduce operational complexity itself? The first produces better communication. The second produces better economics.

Copilots vs. operating model: a contrast leaders can use

A useful way to keep the conversation grounded is to make the contrast explicit, because the two often get blended in steering committees.

An AI copilot improves the throughput of individuals. It excels at turning scattered inputs into clear outputs, which is valuable in any organization where information work is heavy. It is an accelerant for what already exists: faster drafting, faster synthesis, faster preparation, faster internal response. This is real productivity, and in many institutions it is long overdue.

An AI operating model produces outcomes under constraint. It is defined by decision paths, approvals, controls, exception routes, and system updates that must happen in a specific sequence and remain defensible. In regulated industries, operating models exist to protect the institution: they create consistency, enforce controls, and ensure traceability. They are not optimized for speed; they are optimized for reliability. You can improve speed, but only by improving execution inside those constraints, not by pretending the constraints don’t exist.

This contrast matters because most “AI transformations” are stuck on the wrong side of it. They measure adoption and content productivity, then wonder why onboarding cycle time didn’t budge. They celebrate faster drafting, then wonder why underwriting decisions still get delayed by the same missing information and the same rework loops. They ask for ROI while the institution’s core machine remains untouched.

If you want mission-critical gains, the target cannot be “better answers.” The target must be cleaner execution.

A real-world example: commercial onboarding and the cost of ambiguity

Commercial onboarding is the perfect example because it reveals where copilots help and where they don’t. Most leaders assume onboarding is slow because the process involves a lot of documents. In practice, onboarding is slow because documents create ambiguity, and ambiguity creates loops.

The rework rarely comes from one big failure. It comes from dozens of small inconsistencies: a beneficial owner’s details don’t match across documents, a board resolution is missing an annex, signatory powers require a second signature that wasn’t captured, addresses conflict between sources, or a document technically exists but is outside a validity window.

Each issue triggers a clarification request. Each request adds time. Each delay creates internal churn, because relationship managers, compliance teams, onboarding operations, and sometimes legal end up revisiting the same file from different angles. By the time the customer feels “the bank is slow,” the bank has already paid the tax in labor, escalation, and operational risk.

A copilot can make that work less painful. It can draft the ask-back email faster. It can summarize what’s outstanding. It can rewrite customer-facing messages in approved language. It can help teams explain what’s happening to stakeholders. Those are real benefits, and in a high-volume environment they add up.

But copilots do not, by default, remove the bottleneck, because the bottleneck is not phrasing. The bottleneck is ambiguity management. If the organization wants onboarding to move faster without weakening controls, the process must resolve ambiguity early and systematically. That means the system needs to do more than communicate; it needs to validate, cross-check, detect missing items precisely, and route exceptions correctly with evidence attached.
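To make "resolve ambiguity early" concrete, here is a minimal sketch of an intake check that runs before any human picks up the file. It is illustrative only: the document kinds, the 90-day validity window, the exception codes, and the routing queues are all assumptions made for this example, not a description of any particular platform or policy.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Everything below is hypothetical: document kinds, the validity window,
# exception codes, and team queues are placeholders for illustration.
REQUIRED_DOCS = {"certificate_of_incorporation", "board_resolution", "ownership_register"}
MAX_DOC_AGE = timedelta(days=90)  # assumed validity window


@dataclass
class Document:
    kind: str
    issued_on: date
    owner_names: frozenset = frozenset()  # beneficial owners listed, if any


@dataclass
class OnboardingException:
    code: str
    route_to: str  # assumed team queue
    evidence: dict = field(default_factory=dict)


def check_file(docs, today):
    """Return every exception found at intake, each with its evidence attached."""
    found = []

    # 1. Detect missing items precisely.
    present = {d.kind for d in docs}
    for kind in sorted(REQUIRED_DOCS - present):
        found.append(OnboardingException("MISSING_DOCUMENT", "onboarding_ops", {"kind": kind}))

    # 2. Validate each document against the validity window.
    for d in docs:
        if today - d.issued_on > MAX_DOC_AGE:
            found.append(OnboardingException(
                "OUTSIDE_VALIDITY_WINDOW", "onboarding_ops",
                {"kind": d.kind, "issued_on": d.issued_on.isoformat()}))

    # 3. Cross-check beneficial owners across the documents that list them.
    owners_by_doc = {d.kind: d.owner_names for d in docs if d.owner_names}
    if len(set(owners_by_doc.values())) > 1:
        found.append(OnboardingException(
            "OWNER_MISMATCH", "compliance",
            {kind: sorted(names) for kind, names in owners_by_doc.items()}))

    return found


# Usage with made-up data: one missing document, one stale document,
# and two documents that disagree about beneficial owners.
docs = [
    Document("certificate_of_incorporation", date(2025, 1, 10), frozenset({"A. Ionescu"})),
    Document("ownership_register", date(2024, 6, 1), frozenset({"A. Ionescu", "M. Popa"})),
]
issues = check_file(docs, today=date(2025, 2, 1))
for issue in issues:
    print(issue.code, "->", issue.route_to, issue.evidence)
```

The point of the sketch is the shape, not the rules: every problem is surfaced in one pass at intake, each exception names the team that should handle it, and the evidence travels with the exception instead of being rediscovered in a later clarification loop.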

When leaders say they want onboarding to move from weeks to days, what they are really asking for is a different execution pattern: one where the institution stops discovering problems late. That is not a productivity problem. It is an operating model problem.

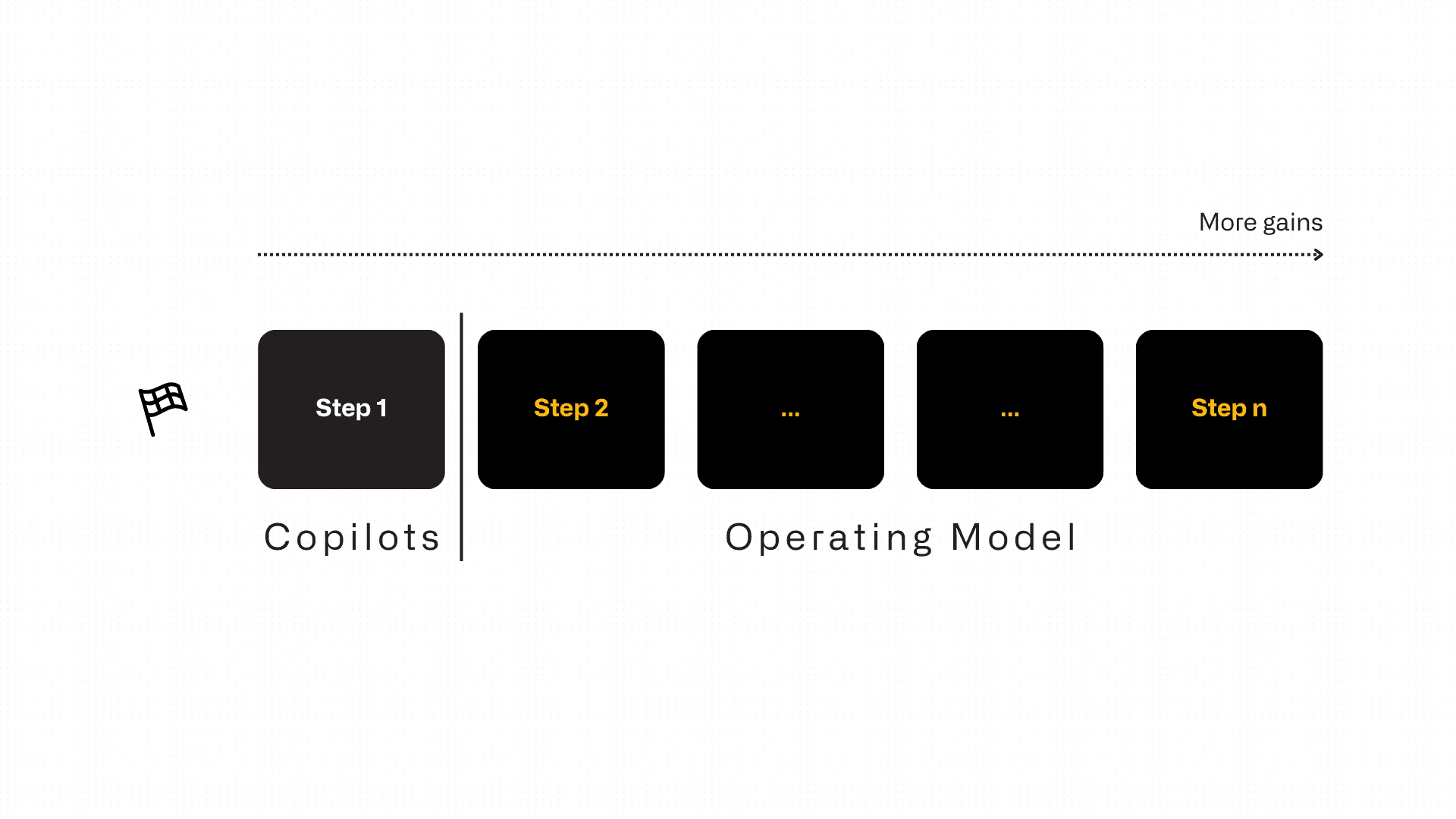

The strategic takeaway: copilots are the front door, not the engine

This is the point many institutions miss because it’s more comfortable to talk about tools than to talk about operating models. Copilots are a valuable front door: they normalize AI, they create adoption, and they raise the productivity floor across teams. But core value streams do not run on front doors. They run on controlled execution behind the door: processes that survive audit, identity models that enforce entitlements, evidence trails that can be reconstructed, and reliability under operational stress.

If your ambition is limited to productivity, “we already have copilots” can be a reasonable stopping point. If your ambition is to compress decision cycles, reduce operational leakage, and scale execution inside regulated workflows, then “we already have copilots” is simply the beginning of the serious conversation.

The rest of this series is designed for that conversation. In the next part, we will name the real gap executives run into when they ask AI to do more than assist: the control deficit. The difference between a helpful assistant and mission-critical AI is not eloquence. It is controllability: governed behavior, evidence, identity, oversight, and reliability under pressure.

Once you understand that deficit, you can stop arguing about assistants and start building an execution capability that produces outcomes a regulated institution can stand behind.

© 2025 FlowX.AI Business Systems