
The Control Deficit: Why AI Copilots Fail The Moment They Touch Real Work

Reader’s Map, a 4-Part Article Series

Why “we already have Copilots” is true, and still strategically incomplete. Copilots raise productivity; they do not, by themselves, create an execution capability that can safely move regulated work through the institution (Part 1 of 4)

The control deficit. The difference between a helpful assistant and mission-critical AI is not eloquence; it is controllability: governed behavior, evidence, identity, oversight, and reliability under operational stress (Part 2 of 4)

A practical definition of “mission-critical AI.” Five tests that separate AI you can demo from AI you can deploy into regulated value streams (Part 3 of 4)

Use case: commercial onboarding as an agentic flow. Not “AI that drafts emails,” but AI that closes files cleanly, catches exceptions early, and leaves audit-ready evidence behind (Part 4 of 4)

This is Part 2 of 4, the control deficit.

Introduction

In regulated businesses, the gap isn’t intelligence. It’s controllability: evidence, identity, oversight, and reliability under stress.

There’s a particular kind of failure that only shows up once AI stops being a slide and starts being a system. In the pilot phase, everything looks clean: the agent is impressive, the demo is smooth, and the outputs feel “good enough” to inspire confidence. Then somebody proposes that the AI participate in a real value stream, something that touches onboarding, credit, claims, servicing, or compliance, and the conversation changes immediately. The questions get sharper. The risk posture gets real. The enthusiasm becomes conditional. That pivot is not politics. It’s more like physics.

When AI is used as a copilot, it lives in the world of assistance: it suggests, drafts, summarizes, and helps people move faster. When AI is used to execute, it enters the world of accountability: actions must be permissioned, decisions must be defensible, exceptions must be handled, and outcomes must be traceable.

The cost of being wrong stops being “a mediocre paragraph” and becomes customer harm, financial exposure, or a control failure. That is why so many “agentic transformations” stall right where leadership wants them most, in the workflows that actually move money and risk.

This is the central reality Part 2 is about: the control deficit. It is the gap between what AI is good at and what regulated operations require. Most organizations don’t lose momentum because the model can’t produce an answer. They lose momentum because they can’t prove the system is safe to act.

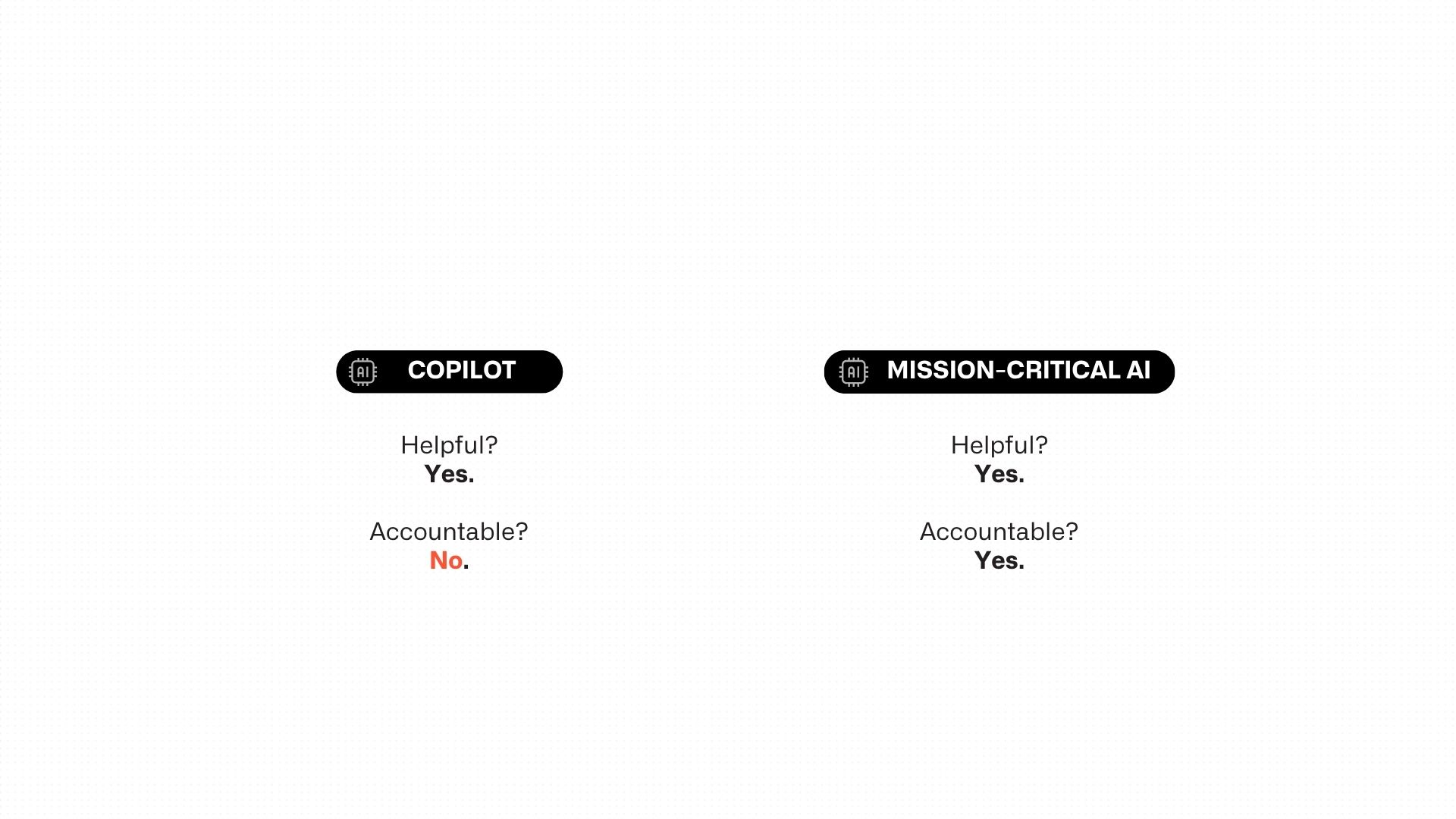

The control deficit in one picture

If you only remember one thing from this series, make it this.

| AI Copilots are… | Mission-Critical AI is… |
|---|---|
| Helpful | Accountable |
| Helpful systems are judged by usefulness. | Accountable systems are judged by controllability. |

A helpful assistant can be evaluated by whether it accelerates a human. An accountable system must be evaluated like infrastructure: whether it behaves predictably at scale, whether it operates within permissions, whether it leaves evidence behind, and whether it degrades safely under stress.

That difference is not philosophical. It determines whether you can deploy AI into your core value streams, or keep it confined to productivity use cases.

Why copilots plateau in regulated work

Leaders often interpret the plateau incorrectly. They’ll say, “We need better prompts,” or “We need a smarter model,” or “We need more training.” In reality, the plateau is structural. It happens because copilots are designed to assist humans inside a knowledge environment, while regulated workflows demand a system that can carry accountability. The gap is not one feature; it is a different design center.

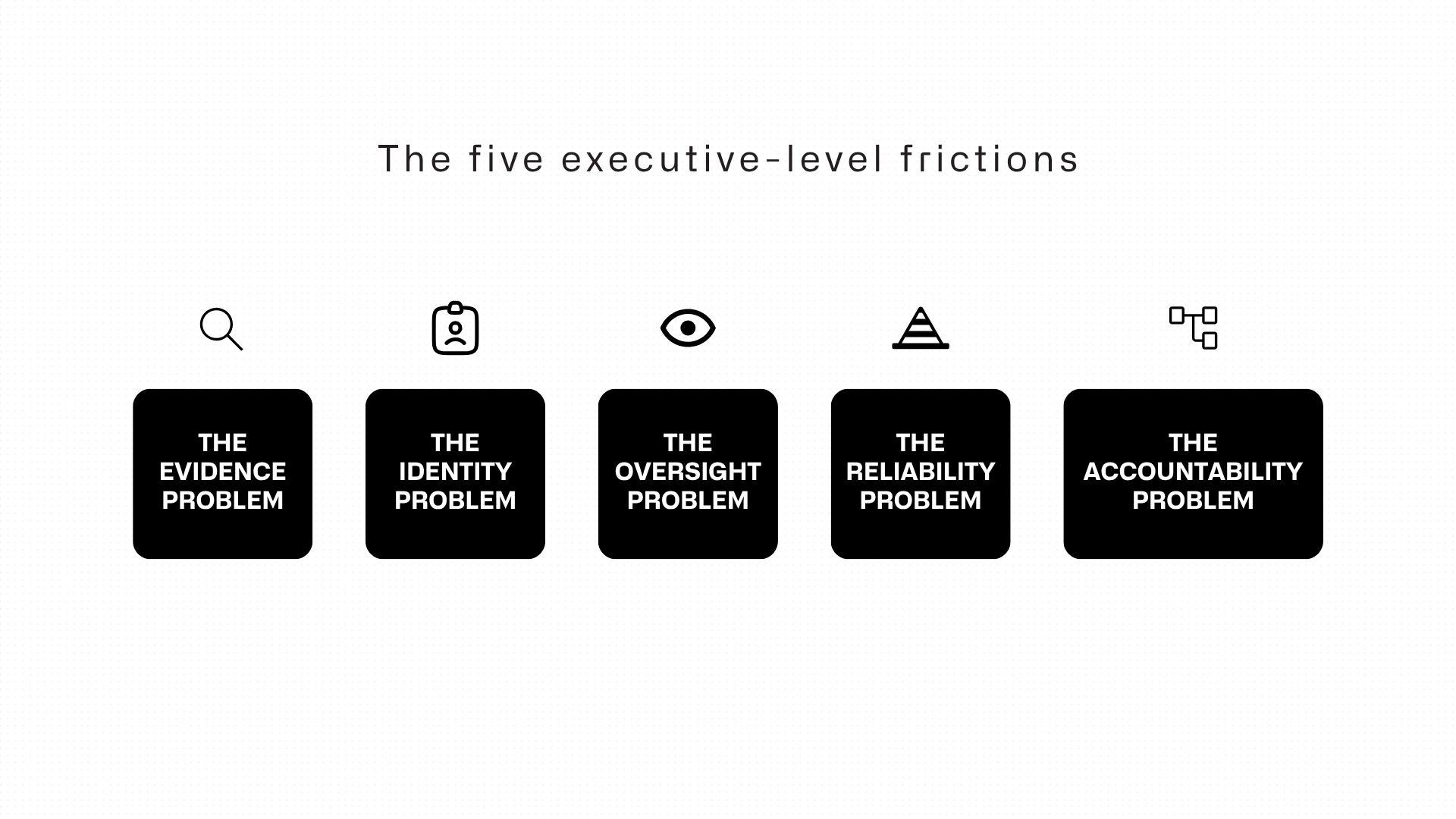

The control deficit typically shows up as five executive-level frictions. They’re not technical complaints; they’re operational red flags.

1) The evidence problem: “It sounds right” isn’t admissible.

In regulated work, you don’t get credit for sounding confident. You get credit for being verifiable.

The moment AI output influences a decision, the next question is not “is this helpful?” It’s “show me the evidence.” Where did this data come from? Which source-of-truth record did it use? What policy did it apply? What clause or rule supports the conclusion? Can a reviewer click through and verify it quickly?

If the system can’t answer those questions cleanly, it will be treated like an untrusted narrator. The institution will wrap humans around it until it becomes slower than the process it was meant to improve.

This is the first place copilots often disappoint leaders: they generate plausible narrative, but they don’t naturally produce evidentiary artifacts. Mission-critical AI must be designed to produce structured outputs with provenance.
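To make “structured outputs with provenance” concrete, here is a minimal sketch, assuming a hypothetical schema in which every claim the AI makes must cite the source-of-truth record it used and the policy rule it applied. The names `SourceRef`, `EvidencedClaim`, `is_admissible`, and `unsupported` are illustrative, not part of any product API.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRef:
    """Pointer to the source-of-truth record a claim relies on (hypothetical schema)."""
    system: str        # system of record the data came from
    record_id: str     # identifier a reviewer can click through to
    retrieved_at: str  # ISO-8601 timestamp of retrieval


@dataclass(frozen=True)
class EvidencedClaim:
    """One assertion, plus the records and the policy rule that support it."""
    statement: str
    sources: tuple[SourceRef, ...]
    policy_rule: str | None = None  # policy clause applied, if any


def is_admissible(claim: EvidencedClaim) -> bool:
    """Treat a claim as evidence only if it cites a source AND names a policy rule."""
    return bool(claim.sources) and claim.policy_rule is not None


def unsupported(claims: list[EvidencedClaim]) -> list[str]:
    """Statements a reviewer cannot verify; these go back to a human, not into the file."""
    return [c.statement for c in claims if not is_admissible(c)]


crm = SourceRef("crm", "CUST-0042", "2025-01-15T09:30:00Z")
claims = [
    EvidencedClaim("Registered address matches the filing", (crm,), "KYC-ADDR-01"),
    EvidencedClaim("Ownership structure looks standard", ()),
]
print(unsupported(claims))  # prints ['Ownership structure looks standard']
```

The design choice is that a claim without provenance is not “wrong”, it is simply inadmissible: it is surfaced to a reviewer instead of silently flowing into the decision.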

2) The identity problem: “Who is the agent, exactly?”

Every regulated institution has an authority model, even if it’s messy: role-based access, entitlements, segregation of duties, approval hierarchies. That model exists because the institution learned, over decades, that authority is a risk surface.

Copilots typically operate in a user’s context. That works for assistance. It becomes dangerous for execution, because execution requires clarity about whose authority is being exercised at each step. If an agent pulls data from one system, updates another, triggers a workflow, and generates communications, the organization must know, precisely, under what identity that occurred, with what entitlements, and with what approvals. Otherwise, the institution has created an automation that cannot be governed.

When leaders hear “agentic automation,” they imagine speed. Risk leaders hear “shadow identity.” If you cannot explain the agent’s authority model in plain language, you have not built mission-critical AI; you have built a compliance argument waiting to happen.
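One way to picture “under what identity, with what entitlements, and with what approvals” is a check that every agent action must pass before it runs. This is a minimal sketch, assuming a hypothetical model in which the agent has its own service identity, acts on behalf of a named user, and may only use entitlements that both hold; `AgentContext`, `REQUIRES_APPROVAL`, and `authorize` are illustrative names.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentContext:
    agent_id: str                       # the agent's own service identity
    on_behalf_of: str                   # the human whose authority is delegated
    agent_entitlements: frozenset[str]
    user_entitlements: frozenset[str]
    approvals: frozenset[str]           # actions a human approver has signed off


# Hypothetical policy: these actions need an explicit human approval
# on top of entitlements (segregation of duties).
REQUIRES_APPROVAL = frozenset({"account.open", "limit.increase"})


def authorize(ctx: AgentContext, action: str) -> tuple[bool, str]:
    """Decide whether the agent may perform `action`, and record why.

    Effective authority is the intersection of the agent's and the delegating
    user's entitlements, so the agent can never exceed either of them.
    """
    effective = ctx.agent_entitlements & ctx.user_entitlements
    if action not in effective:
        return False, (f"{action}: not in effective entitlements of "
                       f"{ctx.agent_id} for {ctx.on_behalf_of}")
    if action in REQUIRES_APPROVAL and action not in ctx.approvals:
        return False, f"{action}: requires human approval"
    return True, f"{action}: allowed as {ctx.agent_id} on behalf of {ctx.on_behalf_of}"


ctx = AgentContext(
    agent_id="agent:onboarding-01",
    on_behalf_of="analyst:a-123",
    agent_entitlements=frozenset({"crm.read", "account.open", "email.send"}),
    user_entitlements=frozenset({"crm.read", "account.open"}),
    approvals=frozenset(),
)
for action in ("crm.read", "email.send", "account.open"):
    print(authorize(ctx, action))
```

Every decision returns a plain-language reason, which is what lets someone explain the agent’s authority model without reading code.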

3) The oversight problem: “Where do humans intervene, and why there?”

The phrase “human in the loop” is often treated as a checkbox. In reality, the question is more serious: which humans, intervening at which points, with what evidence, under what SLA?

In regulated value streams, oversight cannot be generic. It must be designed. Humans need to be placed where judgment is required and risk is concentrated, not forced to micromanage every step because the system isn’t trustworthy. If every action requires a human to “review just in case,” the institution has not automated anything; it has created a new review layer.

Mission-critical AI doesn’t eliminate humans. It eliminates unnecessary human labor while strengthening human control at the moments that matter.
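Designed oversight can be expressed as an explicit routing rule rather than a blanket “review everything”. The sketch below assumes hypothetical risk tiers, reviewer roles, and SLAs; `Step`, `REVIEWERS`, and `checkpoint` are illustrative, and a real institution would derive them from its own risk framework.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    risk: str                # "low", "medium" or "high" (hypothetical tiers)
    evidence_complete: bool  # did every claim in this step cite a source?


# Hypothetical routing table: which human role reviews which risk tier, and the
# SLA in hours. Low-risk steps with complete evidence run without a checkpoint.
REVIEWERS = {"medium": ("operations analyst", 8), "high": ("compliance officer", 24)}


def checkpoint(step: Step) -> str:
    """Return which human must intervene on this step and why, or 'auto'."""
    if not step.evidence_complete:
        role, sla = REVIEWERS["medium"]
        return f"{role} within {sla}h: evidence incomplete"
    if step.risk in REVIEWERS:
        role, sla = REVIEWERS[step.risk]
        return f"{role} within {sla}h: {step.risk} risk"
    return "auto"


steps = [
    Step("collect registry extract", "low", True),
    Step("verify signatory powers", "high", True),
    Step("match ownership details", "low", False),
]
for s in steps:
    print(f"{s.name}: {checkpoint(s)}")
```

Humans are pulled in where risk is concentrated or evidence is missing, each with a named role and SLA, instead of reviewing every step “just in case”.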

4) The reliability problem: “Does it still work when the day is ugly?”

Demos live on happy paths; operations do not.

The day is ugly when documents are missing, data conflicts, a downstream system times out, or volume spikes at the worst possible moment. The day is ugly when a customer’s legal structure is complex, when a policy edge case appears, when a regulator asks for traceability, or when a security team needs to understand the blast radius of an automated action.

In that environment, AI must behave less like a creative assistant and more like a production system: predictable failure handling, safe degradation, explicit exception routes, and observability that lets the business see what’s happening, not after the fact, but as it unfolds.

Most “smart” AI fails here because it wasn’t built to carry operational stress. That’s not a model issue; it’s an engineering and governance issue.
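“Predictable failure handling, safe degradation, explicit exception routes, and observability” can be sketched in a few lines. This assumes a hypothetical downstream call that may time out; `DownstreamTimeout` and `run_step` are illustrative names, and the in-memory `exception_queue` and `events` lists stand in for a real work queue and monitoring pipeline.

```python
from __future__ import annotations

import time
from typing import Callable


class DownstreamTimeout(Exception):
    """Raised when a downstream system does not answer in time (illustrative)."""


def run_step(case_id: str, call: Callable[[], dict], exception_queue: list[dict],
             events: list[str], retries: int = 2, backoff_s: float = 0.5) -> dict | None:
    """Run one step with bounded retries; on persistent failure, degrade safely.

    Safe degradation here means never inventing a result: the case is parked on an
    explicit exception queue for a human, and every attempt is logged as an event
    so the business can observe the failure while it unfolds.
    """
    for attempt in range(1, retries + 2):  # first try plus `retries` retries
        try:
            result = call()
        except DownstreamTimeout:
            events.append(f"{case_id}: attempt {attempt} timed out")
            if attempt <= retries:
                time.sleep(backoff_s * attempt)  # linear backoff before retrying
            continue
        events.append(f"{case_id}: attempt {attempt} succeeded")
        return result
    exception_queue.append({"case": case_id, "reason": "downstream unavailable"})
    events.append(f"{case_id}: routed to exception queue")
    return None


def registry_down() -> dict:
    raise DownstreamTimeout()


queue: list[dict] = []
events: list[str] = []
outcome = run_step("CASE-17", registry_down, queue, events, backoff_s=0.0)
ok = run_step("CASE-18", lambda: {"status": "verified"}, queue, events)
print(outcome, ok, queue)
```

The important property is the bounded, visible failure path: after a fixed number of attempts the case leaves the automated flow through a named exception route rather than stalling or guessing.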

5) The accountability problem: “Can we defend this six months from now?”

The harsh truth of regulated work is that decisions have a long tail. A credit decision can be challenged. An onboarding file can be audited. A claims action can become litigation. A compliance action can be revisited under a new interpretation.

Mission-critical AI must therefore be designed for delayed scrutiny. It must produce artifacts that remain intelligible later: a reconstruction of what happened, when it happened, why it happened, and who approved what. If you cannot replay the decision path months later, you do not have mission-critical AI. You have automated opacity.
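Being able to “replay the decision path months later” implies an append-only record of every step. Here is a minimal sketch, assuming a hypothetical in-memory log in which each entry records what happened, when, why, and who approved it, and is chained to the previous entry with a SHA-256 hash so that later edits are detectable. A production system would persist this in durable, access-controlled storage; `DecisionLog` and its fields are illustrative.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    # Hash a canonical JSON encoding so the digest is reproducible later.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class DecisionLog:
    """Append-only, hash-chained record of one case's decision path."""
    entries: list[dict] = field(default_factory=list)

    def record(self, when: str, actor: str, action: str, reason: str,
               approved_by: str | None = None) -> None:
        prev = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"when": when, "actor": actor, "action": action,
                "reason": reason, "approved_by": approved_by, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute the chain; any edited, reordered, or removed entry breaks it."""
        prev = "0" * 64
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True

    def replay(self) -> list[str]:
        """Readable reconstruction: what happened, when, why, and who approved."""
        return [f"{e['when']} {e['actor']} {e['action']} because {e['reason']}"
                + (f" (approved by {e['approved_by']})" if e["approved_by"] else "")
                for e in self.entries]


log = DecisionLog()
log.record("2025-03-01T10:00Z", "agent:onboarding-01", "flagged missing annex",
           "annex B absent from submission")
log.record("2025-03-01T10:05Z", "agent:onboarding-01", "routed to compliance",
           "signatory powers do not match registry")
log.record("2025-03-02T09:00Z", "reviewer:compliance-02", "approved file",
           "documents verified", approved_by="reviewer:compliance-02")
ok_before = log.verify()
log.entries[0]["reason"] = "edited later"  # simulate an after-the-fact change
ok_after = log.verify()
print(ok_before, ok_after)  # prints True False
```

The hash chain does not make the record correct; it makes tampering visible, which is what a reviewer needs when the decision is revisited.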

Short contrasts that clarify the gap

You don’t need jargon. You need contrasts that hold up in board conversations.

Drafting vs. deciding. Copilots draft faster; mission-critical AI decides within policy and produces proof.

Narrative vs. evidence. Copilots generate narrative; mission-critical AI anchors every claim to verifiable sources.

User context vs. institutional authority. Copilots act like a user; mission-critical AI acts inside roles, entitlements, and approvals.

Helpful under supervision vs. safe under pressure. Copilots are helpful when watched; mission-critical AI remains safe when the day is ugly.

Outputs vs. outcomes. Copilots produce text; mission-critical AI moves a case forward without creating risk.

If your AI system cannot sit comfortably on the right-hand side of these contrasts, leadership will keep it on the left-hand side, no matter how impressive the demo looks.

Example: where the control deficit shows up in a real value stream

Consider a familiar scenario: commercial onboarding. A leadership team wants cycle time down because onboarding delay is revenue delay, and revenue delay is a self-inflicted wound. Someone proposes, “Let’s deploy copilots to the onboarding team. They’ll handle the communications, summarize cases, and speed up the process.”

That will improve the experience of the team. It will not, by itself, change the economics of onboarding. The reason is simple: onboarding delays are not primarily caused by slow writing. They are caused by the late discovery of ambiguity: missing annexes, inconsistent ownership details, signatory powers that don’t match, documents outside their validity windows, and contradictions between the customer’s submissions and the institution’s records.

A copilot can help an analyst explain what’s missing. It does not automatically detect what’s missing early, cross-check it systematically, route exceptions correctly, and produce a file that a compliance reviewer can approve without a back-and-forth.

This is the control deficit in its most practical form: when AI is asked to touch regulated work, leaders need it to behave like an execution system. If it behaves like an assistant, the organization will wrap controls around it until the speed advantage disappears.

The control deficit is solvable, but only if you treat it as the core problem

Many organizations try to solve the control deficit with governance documents and training. Those are important, but they won’t carry the burden alone. The control deficit is not a policy gap. It is an architectural gap. It must be closed with mechanisms: evidence trails, identity and permissions, human checkpoints by design, and reliability that survives operational stress.

That is why Part 3 of this series exists.

In the next piece, we’ll stop talking about “mission-critical AI” as a buzzword and define it in a way leadership can actually use: five tests that separate AI you can demo from AI you can deploy. Each test will be written as a simple definition, a blunt disqualifier line, and a concrete example, because the fastest way to cut through AI noise is to give leaders a standard they can enforce.

If you can pass those five tests, copilots become a front door to real operational leverage. If you can’t, you’ll still have impressive AI, but it will live where risk is low and value is limited.


© 2025 FlowX.AI Business Systems